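Sqoop (short for "SQL to Hadoop") is a convenient tool for moving data between traditional relational databases and Hadoop. It uses MapReduce's parallelism to speed up transfers in batch mode. It has evolved into two major versions: Sqoop1 and Sqoop2.

Sqoop is the bridge in the Hadoop ecosystem between relational databases and Hadoop. It moves data in both directions between relational databases and Hive, HDFS, and HBase, and it supports both full-table and incremental imports.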
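The following minimal sketch shows how a full-table import differs from an incremental one. To keep it safe to run anywhere, it only prints the `sqoop import` command lines instead of executing them. The JDBC URL, user name, table, check column, and target directories are hypothetical placeholders. `--table`, `--target-dir`, `-P`, `--incremental append`, `--check-column`, and `--last-value` are standard Sqoop1 import options.

```shell
#!/usr/bin/env bash
# Hypothetical connection settings; replace them with your own.
JDBC_URL="jdbc:mysql://db-host:3306/shop"
DB_USER="sqoop_user"

# Print (do not run) a full-table import: every row of the table is copied.
full_import_cmd() {
  local table="$1" target="$2"
  echo "sqoop import --connect $JDBC_URL --username $DB_USER -P" \
       "--table $table --target-dir $target"
}

# Print (do not run) an incremental import in append mode: only rows whose
# check column is greater than --last-value are copied.
incremental_import_cmd() {
  local table="$1" target="$2" check_col="$3" last_value="$4"
  echo "sqoop import --connect $JDBC_URL --username $DB_USER -P" \
       "--table $table --target-dir $target" \
       "--incremental append --check-column $check_col --last-value $last_value"
}
```

For example, `incremental_import_cmd orders /data/orders id 1000` prints a command that copies only rows with `id > 1000`. Append mode suits tables whose new rows receive increasing IDs. For rows that are updated in place, Sqoop1 also offers `--incremental lastmodified`.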

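So why choose Sqoop?

- Efficient, controllable use of resources: task parallelism and timeouts can be tuned.
- Data type mapping and conversion happen automatically, and users can also define their own mappings.
- Many mainstream databases are supported, including MySQL, Oracle, SQL Server, and DB2.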
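Parallelism comes from splitting one import across several map tasks. With `-m N` (`--num-mappers`), Sqoop queries the minimum and maximum of the split column (the primary key, or the column named by `--split-by`) and gives each mapper one sub-range. The function below is a simplified model of that idea for an integer column. It is not Sqoop's actual splitter code, which differs in details such as how boundaries are computed. The function name `split_ranges` is hypothetical.

```shell
#!/usr/bin/env bash
# Simplified model (not Sqoop's actual splitter) of dividing an integer
# key range [min, max] into roughly equal, inclusive sub-ranges, one per mapper.
split_ranges() {
  local col="$1" min="$2" max="$3" mappers="$4"
  local span=$(( max - min + 1 ))                   # number of key values
  local size=$(( (span + mappers - 1) / mappers ))  # ceiling division
  local lo=$min hi i=0
  while [ "$i" -lt "$mappers" ] && [ "$lo" -le "$max" ]; do
    hi=$(( lo + size - 1 ))
    if [ "$hi" -gt "$max" ]; then
      hi=$max                                       # clamp the last range
    fi
    echo "mapper $i: $col BETWEEN $lo AND $hi"
    lo=$(( hi + 1 ))
    i=$(( i + 1 ))
  done
}
```

For example, `split_ranges id 1 100 4` prints four ranges. The first is `mapper 0: id BETWEEN 1 AND 25` and the last is `mapper 3: id BETWEEN 76 AND 100`. The split is by key value rather than by row count, so a skewed key distribution leaves some mappers with much more work than others.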

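2. Similarities and differences between Sqoop1 and Sqoop2

- The two are separate versions and are completely incompatible with each other.
- They are distinguished by version number:
  - Apache releases: 1.4.x is Sqoop1; 1.99.x is Sqoop2.
  - CDH releases: Sqoop-1.4.3-cdh4 is Sqoop1; Sqoop2-1.99.2-cdh4.5.0 is Sqoop2.
- Sqoop2 improves on Sqoop1 as follows:
  - It introduces a Sqoop server that centrally manages connectors and other resources.
  - It offers several access methods: CLI, Web UI, and REST API.
  - It introduces a role-based security mechanism.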
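The version-numbering rule above can be written as a small check. This is a hypothetical helper, not part of Sqoop. It assumes the version string looks like the ones listed, for example `1.4.4-cdh5.1.2` or `Sqoop2-1.99.2-cdh4.5.0`. On a machine with a Sqoop1 client, `sqoop version` shows the installed version.

```shell
#!/usr/bin/env bash
# Hypothetical helper: map a Sqoop version string to its generation using the
# numbering rule 1.4.x = Sqoop1, 1.99.x = Sqoop2.
sqoop_generation() {
  local v="$1"
  v="${v##*[Ss]qoop-}"    # strip a "Sqoop-"/"sqoop-" prefix, e.g. Sqoop-1.4.3-cdh4
  v="${v##*[Ss]qoop2-}"   # strip a "Sqoop2-" prefix, e.g. Sqoop2-1.99.2-cdh4.5.0
  case "$v" in
    1.99.*) echo "Sqoop2" ;;
    1.4.*)  echo "Sqoop1" ;;
    *)      echo "unknown" ;;
  esac
}
```

For example, `sqoop_generation 1.4.4-cdh5.1.2` prints `Sqoop1`, and `sqoop_generation Sqoop2-1.99.2-cdh4.5.0` prints `Sqoop2`.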

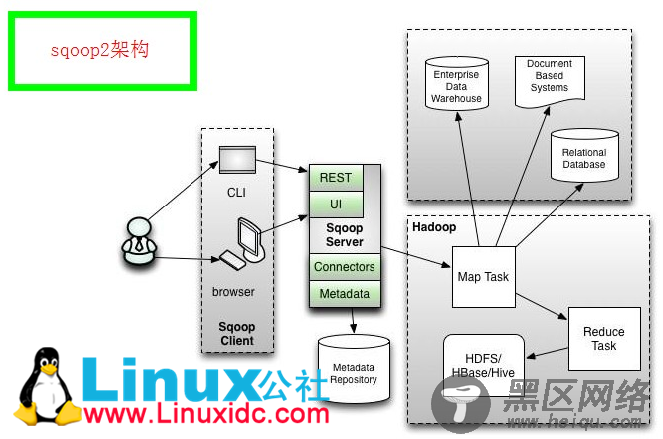

3. Architecture of Sqoop1 and Sqoop2

Sqoop architecture diagram 1

Sqoop architecture diagram 2


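4. Pros and cons of Sqoop1 and Sqoop2

| Aspect | Sqoop1 | Sqoop2 |
| --- | --- | --- |
| Architecture | Uses only a Sqoop client | Introduces a Sqoop server that centrally manages connectors, adds a REST API and a web UI, and introduces a permission/security mechanism |
| Deployment | Simple to deploy; installation requires root privileges; connectors must conform to the JDBC model | Somewhat more complex architecture; configuration and deployment are more cumbersome |
| Usage | The command-line interface is error-prone, its formats are tightly coupled, not all data types are supported, and security is incomplete (for example, passwords can be exposed) | Several ways to interact (command line, web UI, REST API); connectors are managed centrally, with all connections set up on the Sqoop server; a complete permission management mechanism; connectors are standardized and only read and write data |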
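In Sqoop1, the password exposure listed in the table usually comes from passing `--password` on the command line. There, other users can see it in `ps` output, and it is saved in shell history. The `-P` option instead makes Sqoop prompt for the password on the console. The guard below is a hypothetical helper (not part of Sqoop) that rejects any argument list containing `--password`.

```shell
#!/usr/bin/env bash
# Hypothetical guard for Sqoop1 argument lists: reject a password given on the
# command line (visible in `ps` output and shell history) and suggest -P, which
# makes Sqoop prompt for the password on the console instead.
check_sqoop_args() {
  local arg
  for arg in "$@"; do
    case "$arg" in
      --password|--password=*)
        echo "refusing: use -P instead of --password" >&2
        return 1
        ;;
    esac
  done
  return 0
}
```

A typical use is to collect the arguments in a bash array, then run `check_sqoop_args "${args[@]}" && sqoop "${args[@]}"`, so that Sqoop only starts when no plaintext password is present.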

5. Installing and deploying Sqoop1

5.0 Installation environment

- Hadoop: hadoop-2.3.0-cdh5.1.2
- Sqoop: sqoop-1.4.4-cdh5.1.2

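5.1 Download and extract the installation package

```shell
# Extract the release tarball
tar -zxvf sqoop-1.4.4-cdh5.1.2.tar.gz
# Point a version-independent "sqoop" symlink at the extracted directory
ln -s sqoop-1.4.4-cdh5.1.2 sqoop
```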
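It is also common to put the Sqoop client on `PATH`. Here is a minimal sketch. It assumes the symlink above resolves to `/home/hadoop/sqoop`, which matches the `/home/hadoop/...` paths used in sqoop-env.sh, and that your shell reads `~/.bashrc`. The function name `add_sqoop_env` is hypothetical. It appends the exports only once, so running it again is harmless.

```shell
#!/usr/bin/env bash
# Hypothetical helper: append SQOOP_HOME and PATH exports to an rc file once.
# Assumes Sqoop is reachable through the symlink /home/hadoop/sqoop.
add_sqoop_env() {
  local rc_file="${1:-$HOME/.bashrc}"
  local sqoop_home="${2:-/home/hadoop/sqoop}"
  # Skip if a SQOOP_HOME export is already present, so reruns change nothing.
  if grep -q '^export SQOOP_HOME=' "$rc_file" 2>/dev/null; then
    return 0
  fi
  {
    echo "export SQOOP_HOME=$sqoop_home"
    echo 'export PATH=$SQOOP_HOME/bin:$PATH'   # single quotes: expand at login
  } >> "$rc_file"
}
```

Run `add_sqoop_env` once, optionally passing a different rc file and install path. Then open a new shell or run `source ~/.bashrc` so that `sqoop` is found on `PATH`.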

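5.2 Configure environment variables and configuration files

```shell
cd sqoop/conf/
# Create sqoop-env.sh from the bundled template
cp sqoop-env-template.sh sqoop-env.sh
vi sqoop-env.sh
```

Edit sqoop-env.sh so that it reads as follows: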

```shell
# Licensed to the Apache Software Foundation (ASF) under one or more
# contributor license agreements. See the NOTICE file distributed with
# this work for additional information regarding copyright ownership.
# The ASF licenses this file to You under the Apache License, Version 2.0
# (the "License"); you may not use this file except in compliance with
# the License. You may obtain a copy of the License at
#
#
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.

# included in all the hadoop scripts with source command
# should not be executable directly
# also should not be passed any arguments, since we need original $*

# Set Hadoop-specific environment variables here.

#Set path to where bin/hadoop is available
export HADOOP_COMMON_HOME=/home/hadoop/hadoop

#Set path to where hadoop-*-core.jar is available
export HADOOP_MAPRED_HOME=/home/hadoop/hadoop

#set the path to where bin/hbase is available
export HBASE_HOME=/home/hadoop/hbase

#Set the path to where bin/hive is available
export HIVE_HOME=/home/hadoop/hive

#Set the path for where zookeeper config dir is
export ZOOCFGDIR=/home/hadoop/zookeeper
```

Of these settings, only HADOOP_COMMON_HOME is required. HBASE_HOME and HIVE_HOME only need to be set if you use HBase or Hive; otherwise you can leave them out.
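You can check this rule mechanically. The sketch below is hypothetical: the function name `check_sqoop_env` and its messages are not part of Sqoop. It sources a `sqoop-env.sh` in a subshell and fails if `HADOOP_COMMON_HOME` does not point to an existing directory. For `HBASE_HOME` and `HIVE_HOME` it only reports whether they are set. It needs bash, because it uses indirect expansion (`${!var}`).

```shell
#!/usr/bin/env bash
# Hypothetical checker for conf/sqoop-env.sh.
# HADOOP_COMMON_HOME must be set to an existing directory; HBASE_HOME and
# HIVE_HOME are optional and only reported.
check_sqoop_env() {
  local env_file="$1"
  (
    # Work in a subshell so the caller's environment is left untouched, and
    # clear the variables first so only the file's values are checked.
    unset HADOOP_COMMON_HOME HBASE_HOME HIVE_HOME
    # shellcheck disable=SC1090
    . "$env_file" || exit 1
    if [ -z "${HADOOP_COMMON_HOME:-}" ] || [ ! -d "$HADOOP_COMMON_HOME" ]; then
      echo "ERROR: HADOOP_COMMON_HOME is not set to an existing directory"
      exit 1
    fi
    echo "OK: HADOOP_COMMON_HOME=$HADOOP_COMMON_HOME"
    local var
    for var in HBASE_HOME HIVE_HOME; do
      if [ -n "${!var:-}" ]; then
        echo "OK: $var=${!var}"
      else
        echo "INFO: $var not set (only needed if you use it)"
      fi
    done
  )
}
```

For example, `check_sqoop_env sqoop/conf/sqoop-env.sh` prints one `OK:` or `INFO:` line per variable. It returns a non-zero status when the required variable is missing, so it can guard an install script.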